Station X Saturn Opportunities

TL;DR

This document looks into how Station could take on benchmarking and load testing for Saturn L1 nodes. It assesses the opportunity against the following criteria:

- Saturn requirements

- Station node requirements

- Orchestration requirements

- Verifiability/provability

Status Quo Process Flow

The Saturn team tests their network from the user perspective in the following way.

- The Arc service worker fetches the top IPFS gateway CIDs from the following endpoint: https://cids.arc.io/top-cids?limit=500 (how often is this list updated?)

- The service worker randomly selects a CID from this list weighted by how popular the CID is. This is to preserve the distribution of requests in the organic traffic.

- The service worker then makes a request to IPFS and Saturn with the chosen CID.

- On each response, it creates a benchmark record with the following fields.

| Field | Description |
|---|---|
| service | IPFS or Saturn |
| cid | The chosen CID |
| url | The request URL |
| transferId | saturn-transfer-id header, falling back to the x-bfid header |
| httpStatusCode | Status code of the response |
| httpProtocol | quic-status header |
| nodeId | saturn-node-id header, falling back to the x-ipfs-pop header |
| cacheStatus | saturn-cache-status header, falling back to the x-proxy-cache header |
| ttfb | new Date() timestamp when the response arrives |
| ttfbAfterDnsMs | responseStart minus requestStart of the request's PerformanceResourceTiming entry (window.performance) |
| dnsTimeMs | domainLookupEnd minus domainLookupStart of the request's PerformanceResourceTiming entry (window.performance) |
| startTime | new Date() at the start of the request invocation |
| endTime | new Date() at the end of response handling |
| transferSize | Size of the response body |
| isError | Did the request error out? |
| isDir | Is the CID addressing a directory instead of a file? |
| traceparent | OpenTelemetry traceparent built from the transferId header |

- The service worker then sends one or more additional requests into the Saturn network to increase the network load.

- The service worker then reports the benchmark record to a benchmark report URL (AWS) and to a log ingestor URL (also an AWS endpoint), both of which write the records into Postgres DBs. There is currently no fraud detection to check that the values in the benchmark record are genuine. Fraud detection is carried out on the Log Ingestor DB.

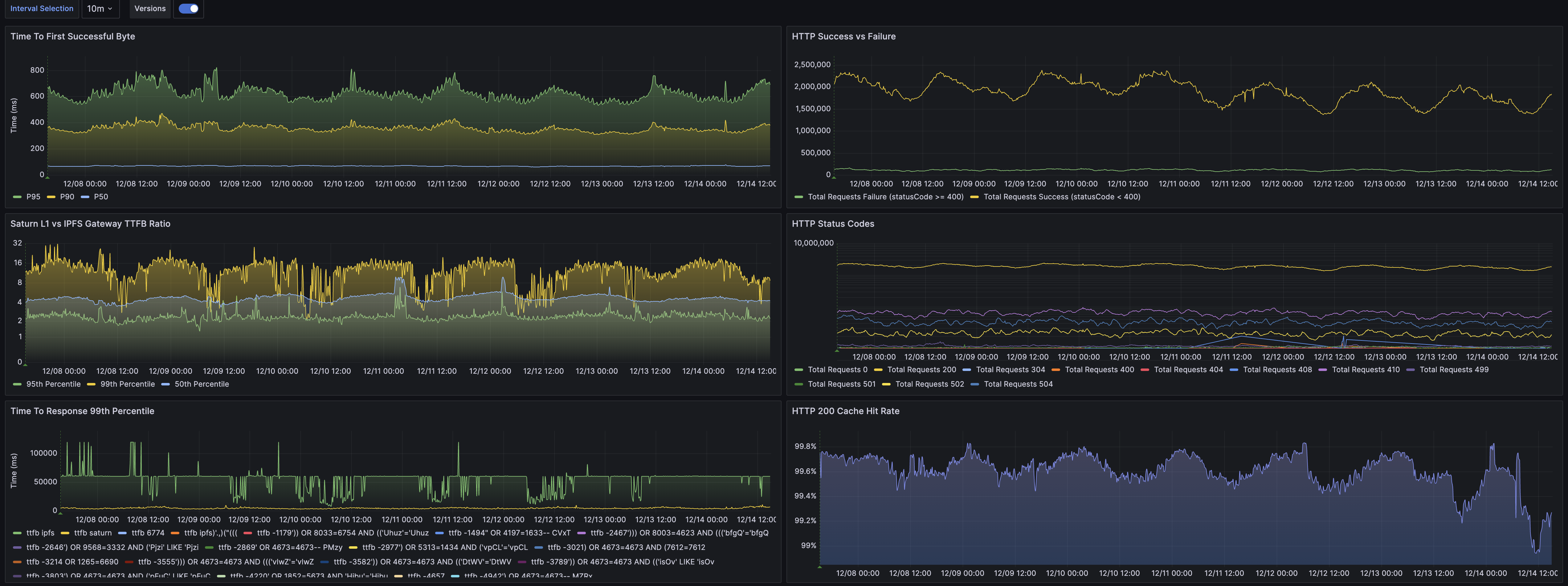

- You can view aggregated metrics from the Postgres DBs in this dashboard.
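The flow above involves two computations that can be sketched in TypeScript. The first picks a CID with probability proportional to its popularity. The second derives `ttfbAfterDnsMs` and `dnsTimeMs` from a resource-timing entry.

This is a minimal sketch, not the Arc service worker's actual code. It assumes the top-CIDs endpoint can be reduced to `{ cid, weight }` pairs with non-negative weights; the real response shape is not documented here. The `TopCid` and `TimingLike` types are hypothetical.

```typescript
// Hypothetical shape of one entry from the top-CIDs list; the real response
// format of https://cids.arc.io/top-cids is an assumption here.
interface TopCid {
  cid: string;
  weight: number; // non-negative popularity, e.g. request count
}

// Pick one CID with probability proportional to its weight, so synthetic
// traffic follows the organic distribution. `rand` must return a value in
// [0, 1); it is injectable so tests can be deterministic.
function pickWeightedCid(list: TopCid[], rand: () => number = Math.random): string {
  let total = 0;
  for (const entry of list) total += entry.weight;
  if (list.length === 0 || total <= 0) {
    throw new Error("top-CIDs list is empty or has no positive weights");
  }
  let threshold = rand() * total;
  for (const entry of list) {
    threshold -= entry.weight;
    if (threshold < 0) return entry.cid;
  }
  // Floating-point drift guard: fall back to the last positively weighted CID.
  for (let i = list.length - 1; i >= 0; i--) {
    if (list[i].weight > 0) return list[i].cid;
  }
  throw new Error("unreachable: total > 0 implies a positive weight");
}

// The subset of PerformanceResourceTiming attributes used by the record (ms).
interface TimingLike {
  domainLookupStart: number;
  domainLookupEnd: number;
  requestStart: number;
  responseStart: number;
}

function timingFields(t: TimingLike): { ttfbAfterDnsMs: number; dnsTimeMs: number } {
  return {
    ttfbAfterDnsMs: t.responseStart - t.requestStart,
    dnsTimeMs: t.domainLookupEnd - t.domainLookupStart,
  };
}
```

In a browser, the timing entry would come from `performance.getEntriesByName(url)`. For cross-origin requests, browsers report these attributes as 0 unless the response carries a `Timing-Allow-Origin` header that permits the caller.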

Opportunity: Station nodes as network testers

Station nodes can replace Arc service workers to perform these Saturn network tests and measurements.

Pros

- Save on the costs of running the Arc network. Will Arc continue to exist after Saturn nucleation?

- Saturn becomes independent of the Arc network.

- Users who are running the Arc service worker are not aware that they are testing the Saturn network. Using Station means no more clandestine network testing/monitoring.

Cons

- Station operators are not trusted. They may try to game or defraud the system as everything is now in the open.

- The Station network is not yet as large or geo-distributed as the Arc network.

Questions

- Once Saturn has enough clients will the benchmarking and load come from real Saturn clients, integrated with the Saturn service worker, rather than synthetic clients like Arc and/or Station, thus making this project redundant?

Requirements

Saturn

Load

- Saturn currently serves 140TB a day. The majority of this traffic is from Arc service workers.

- Saturn serves 300M requests per day, again mainly from Arc service workers.
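These figures can be converted to averages to show the load Station would need to generate. The calculation uses decimal units (1 TB = 10^12 B) and assumes traffic is spread evenly across the day:

```latex
\text{request rate} = \frac{300 \times 10^{6}\ \text{requests/day}}{86\,400\ \text{s/day}} \approx 3\,472\ \text{requests/s}

\text{mean response size} = \frac{140 \times 10^{12}\ \text{B/day}}{300 \times 10^{6}\ \text{requests/day}} \approx 4.67 \times 10^{5}\ \text{B} \approx 467\ \text{kB}

\text{mean throughput} = \frac{140 \times 10^{12}\ \text{B/day}}{86\,400\ \text{s/day}} \approx 1.62 \times 10^{9}\ \text{B/s} \approx 13\ \text{Gbit/s}
```

Real traffic is bursty, so peak rates will be higher than these averages.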

Reduce costs

- Arc is expensive for Protocol Labs and Saturn may not wish to take on this cost centre through nucleation

Geo-distribution

- Saturn requires clients to make requests from a wide range of geo-locations so that it can make accurate claims about its TTFB worldwide

Station

Nodes

- Station server and desktop nodes

- Same requirement as Spark.

Network

- Enough nodes in the network to create the requisite amount of traffic.

- Enough geo-distribution.

Orchestration

- For simple retrieval tests to measure TTFB, TTLB, etc., we can simply ask all Stations to make requests to the Saturn network and measure the results.

- For load testing, we may need more coordination between Stations in a particular region, although the current Arc solution doesn’t do any orchestration here. We will need Station node geolocation in place first.
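A simple retrieval test of this kind could time the first and last byte of a response body. The sketch below uses a hypothetical `openBody` hook that returns a reader modelled on the Web Streams `read()` method, so it runs without a network. Here TTFB means time to the first *body* chunk, which is slightly later than the arrival of the response headers. Timestamps use `Date.now()`, so resolution is one millisecond.

```typescript
// Minimal reader interface, modelled on ReadableStreamDefaultReader.read().
interface ChunkReader {
  read(): Promise<{ done: boolean; value?: Uint8Array }>;
}

interface RetrievalResult {
  ttfbMs: number | null; // time to first body byte; null if none arrived
  ttlbMs: number; // time until the body ended (or the request failed)
  bytes: number; // total body bytes received
  isError: boolean;
}

// `openBody` (hypothetical) starts the request and returns a body reader.
async function measureRetrieval(
  openBody: () => ChunkReader | Promise<ChunkReader>,
  now: () => number = Date.now,
): Promise<RetrievalResult> {
  const start = now();
  let ttfbMs: number | null = null;
  let bytes = 0;
  try {
    const reader = await openBody();
    for (;;) {
      const { done, value } = await reader.read();
      if (done) break;
      if (value && value.length > 0) {
        if (ttfbMs === null) ttfbMs = now() - start; // first body byte
        bytes += value.length;
      }
    }
    return { ttfbMs, ttlbMs: now() - start, bytes, isError: false };
  } catch {
    // Network or stream failure: report what was measured so far.
    return { ttfbMs, ttlbMs: now() - start, bytes, isError: true };
  }
}
```

With the Fetch API, `openBody` could be `async () => (await fetch(url)).body!.getReader()`. Note that `fetch` does not reject on non-2xx statuses, so the status code would need to be recorded separately.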

Verifiability/Provability

- Arc users are not fully aware that their browsers are making these requests, so I assume nobody is trying to alter them. Also, Arc nodes do not earn anything for the requests that measure Saturn, so there is no incentive to game the system.

- Station operators may try to earn from this module by spinning up multiple nodes across VPSs. They will also look for ways to tweak the protocol so they can earn without doing all the work.

- We can use Spark’s proof of data possession to ensure that the Station operator has fetched the entire file. Verifiability around TTFB and TTLB is unsolved.

- The results from these network tests could be used by Saturn’s fraud detection module.

Attack vectors

- J: When Saturn operators discover this connection, they will be incentivized to add cheating Station nodes, in order to create retrieval results that will lead to higher Saturn rewards. This helps grow the Station network, but puts more pressure on fraud prevention strategies.

- M: At the moment, Station retrievals (SPARK, Arc replacement) use a different user agent than Saturn does. SPs can use this information to identify our checker module and give it better service than regular traffic.

- Discussion about user agents: https://github.com/filecoin-station/rusty-lassie/pull/78

Work Plan

Phase 1: Benchmarking Module PoC

Starts: January 2024

Concludes: M4.1: 2024/02/09

The Station team ships a Saturn benchmark module that runs the Arc service worker logic but only performs and submits the benchmark records. It does not submit the bandwidth logs, which are used to calculate contributions and therefore payouts.

We add a field to each benchmark record that marks whether it came from the Station module or from Arc.
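One way to add that field is a `source` discriminator on the record type. The field name `source`, its values, and the helper functions are assumptions for illustration. Only a subset of the fields from the table above is shown.

```typescript
// Hypothetical origin marker; the real field name is still to be agreed with Saturn.
type BenchmarkSource = "arc" | "station";

// Subset of the benchmark record fields from the status quo table.
interface BenchmarkRecord {
  service: "IPFS" | "Saturn";
  cid: string;
  httpStatusCode: number;
  ttfbAfterDnsMs: number;
  isError: boolean;
  source: BenchmarkSource;
}

// Station module side: every record it submits is tagged as coming from Station.
function stationRecord(fields: Omit<BenchmarkRecord, "source">): BenchmarkRecord {
  return { ...fields, source: "station" };
}

// Dashboard side: keep Arc and Station series apart so they can be compared.
function splitBySource(records: BenchmarkRecord[]): Record<BenchmarkSource, BenchmarkRecord[]> {
  const out: Record<BenchmarkSource, BenchmarkRecord[]> = { arc: [], station: [] };
  for (const r of records) out[r.source].push(r);
  return out;
}
```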

Benchmarks do not contribute to Saturn payouts, whereas the bandwidth logs do. The benchmarks are reported into the Benchmark DB and used to show how Saturn compares to the IPFS Gateway. Because of this, we believe Station operators have no incentive to alter the records. Specifically, if these benchmark records only feed the Saturn dashboard, Station nodes will want to report correctly. The more accurate Saturn’s dashboard is, the more likely Saturn is to improve its service, the more likely more customers are to onboard, and the more likely Saturn is to pay funds into this module.

Success Criteria

- Voyager charts are within a 5% discrepancy threshold of the existing Arc charts.

- We should go through each chart individually and assess whether the Voyager version is good enough.
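The 5% criterion needs a precise definition. One option is the relative difference between matching data points on the Voyager and Arc charts, with a chart passing only if every point is within the threshold. Comparing point by point, rather than aggregates such as a daily median TTFB, is an assumption. The function names are hypothetical.

```typescript
// Relative discrepancy of a Voyager value against the Arc reference value.
function relativeDiscrepancy(voyager: number, arc: number): number {
  if (arc === 0) return voyager === 0 ? 0 : Infinity;
  return Math.abs(voyager - arc) / Math.abs(arc);
}

// A chart passes if every aligned data point is within the threshold (default 5%).
function chartWithinThreshold(voyager: number[], arc: number[], threshold = 0.05): boolean {
  if (voyager.length !== arc.length) {
    throw new Error("series must be aligned point by point");
  }
  for (let i = 0; i < arc.length; i++) {
    if (relativeDiscrepancy(voyager[i], arc[i]) > threshold) return false;
  }
  return true;
}
```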

Phase 2: Benchmarking Module with Payouts

February 2024

Pricing: $1500 per month

The Station team rolls out a full Impact Evaluator for Saturn benchmarks, and the Saturn team starts paying into its smart contract. Voyager can use the Spark CID sampling algorithm with committees.

At this point we can discuss winding down the Arc network's benchmark runs. However, in the Arc service worker these requests are also used for fraud detection and for Saturn node rewards. Submitting the benchmarks into the Log DB (as the Arc service worker does) would open up an attack vector: any L1 could run Station nodes and report false stats about other L1s or about themselves to boost their payouts. We would need to discuss this in depth with the Saturn team. My view is that in the mid to long term, benchmarking from Arc/Station shouldn’t contribute to payouts anyway, because it is synthetic. All payouts should come from Saturn service workers integrated into real client websites.
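Committees are one way to make false reports from individual Stations harder to pass off. Several Stations measure the same CID in the same round, and the evaluator accepts the committee's median while flagging outliers. This is a hypothetical sketch of that idea, not Spark's actual committee or sampling algorithm. The `Measurement` shape and the 2× outlier factor are assumptions.

```typescript
// Hypothetical report from one Station for one CID in one round.
interface Measurement {
  stationId: string;
  cid: string;
  ttfbMs: number;
}

function median(values: number[]): number {
  const sorted = values.slice().sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

// For one committee (all measurements of one CID in one round), return the
// median TTFB and the Stations whose report is more than `factor` times
// above or below that median.
function evaluateCommittee(
  committee: Measurement[],
  factor = 2,
): { medianTtfbMs: number; outliers: string[] } {
  if (committee.length === 0) throw new Error("empty committee");
  const med = median(committee.map((m) => m.ttfbMs));
  const outliers = committee
    .filter((m) => m.ttfbMs > med * factor || m.ttfbMs < med / factor)
    .map((m) => m.stationId);
  return { medianTtfbMs: med, outliers };
}
```

A median tolerates a minority of dishonest committee members. It does not help if one operator controls most of a committee, which is why committee assignment would also need to be hard to predict.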

Phase 3: Load Testing PoC

March 2024

The Arc service worker creates a huge amount of load on the Saturn network. From what I can tell, these requests do not contribute to Saturn operator payouts. Once there are enough Station nodes, we can look into load testing the Saturn network.

This will require dynamic tasking, as the module will need to increase or reduce the load per Station as the total number of Station nodes rises and falls. If too many nodes are in one geography, we will also need to adjust the number of load requests there.
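The per-Station load could be recomputed each round from a regional target and the number of Stations currently active in each region. This keeps a crowded region from overloading Saturn, while a sparse region still gets as much load as its nodes are allowed to send. The sketch assumes hypothetical regional targets and a per-Station cap; neither is a figure agreed with Saturn.

```typescript
// Hypothetical inputs keyed by region name: requests per hour Saturn wants
// generated, and the number of Stations that checked in this round.
type RegionCounts = Record<string, number>;

interface RegionPlan {
  perStation: number; // requests per hour each Station in the region should send
  shortfall: number; // requests per hour the region still lacks after capping
}

function planLoad(
  targets: RegionCounts,
  activeStations: RegionCounts,
  maxPerStation: number,
): Record<string, RegionPlan> {
  const plan: Record<string, RegionPlan> = {};
  for (const region of Object.keys(targets)) {
    const target = targets[region];
    const nodes = activeStations[region] ?? 0;
    if (nodes === 0) {
      plan[region] = { perStation: 0, shortfall: target };
      continue;
    }
    // Share the target evenly (rounding up may overshoot slightly), but never
    // ask a single Station for more than the cap.
    const perStation = Math.min(Math.ceil(target / nodes), maxPerStation);
    plan[region] = { perStation, shortfall: Math.max(0, target - perStation * nodes) };
  }
  return plan;
}
```

Each Station would need to know its region, which depends on the Station node geolocation work noted under Orchestration.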

Phase 4: Load Testing with Payouts

April 2024

Pricing: TBC

Once we have the orchestration in place to do effective load testing, we can link it up to a Meridian smart contract.

Pricing

TL;DR

First month (Phase 1) is free.

Intro

For Station to be an attractive option for Saturn, it needs to compare well against products like Pingdom. We believe Station is far better suited, based on the following factors:

- Number of PoPs

- Compatible with Content Addressing

- Far more URL (or CID) checks for the same price.

Pingdom

As an example, for $1500 a month on Pingdom, you can get

- An uptime check for 2500 URLs

- 200 advanced tests (page speed and transaction tests)

- 1000 SMS alerts

Uptime Check

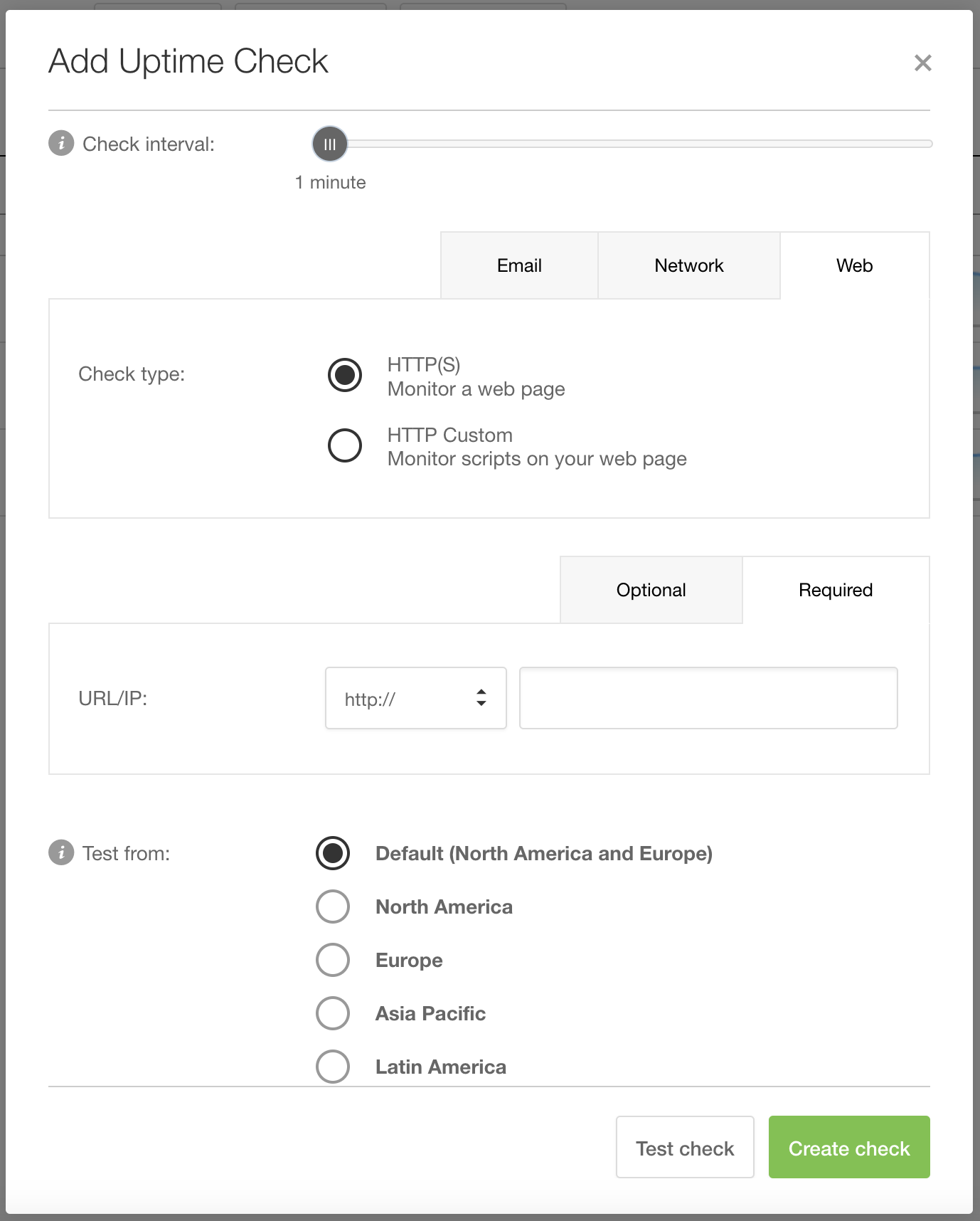

An uptime check input form looks as follows:

Advanced Check

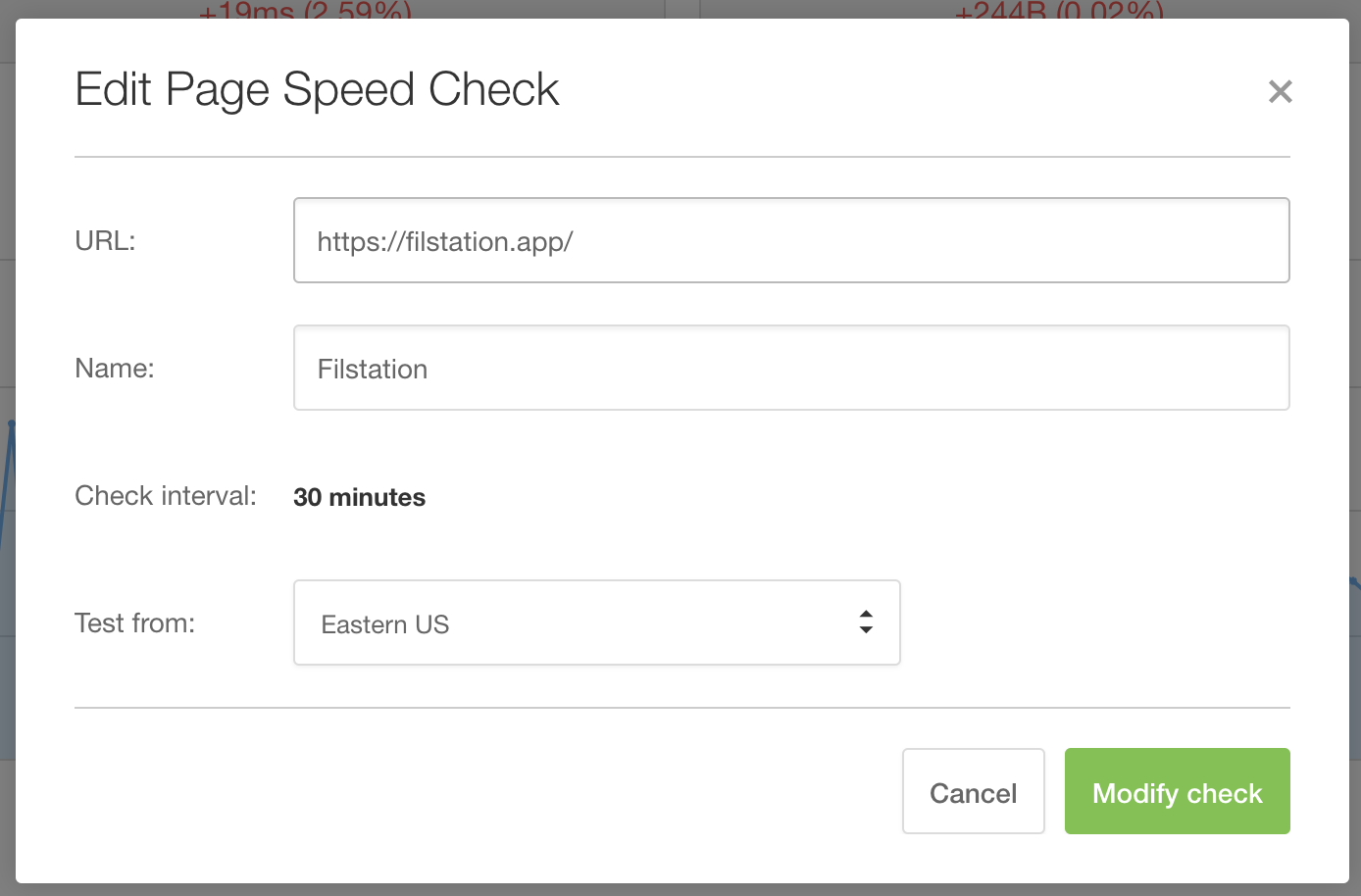

An advanced check takes place every 30 mins and you can only pick one location from which to make the checks. The form to set up a speed check looks like this:

For Saturn’s use case on Pingdom, each CID check would be a separate URL, and TTFB falls under the advanced test category. This means that for $1500 a month you could test 200 fixed CID URLs from one location, which we believe isn’t sufficient for this use case.

Station

For $1500 a month (in FIL) on Station, you can

- Test the Saturn Network from all geolocations, i.e. measurements from all active Station nodes.

- 1,000,000 measurements per hour.

- Integration with existing Saturn benchmarking DB.

- Direct access to the Station team 😉 for integration and future features.
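Using only the figures quoted in this section, the difference in measurement volume for the same $1500 a month can be made concrete. The Pingdom figure assumes 200 advanced checks, each running every 30 minutes. The Station figure is the 1,000,000 measurements per hour listed above. A 30-day month is assumed.

```typescript
const HOURS_PER_MONTH = 30 * 24; // assumption: 30-day month

// Pingdom: a fixed number of advanced checks, each run once per interval.
function pingdomMeasurementsPerMonth(checks: number, intervalMinutes: number): number {
  return checks * (60 / intervalMinutes) * HOURS_PER_MONTH;
}

function stationMeasurementsPerMonth(perHour: number): number {
  return perHour * HOURS_PER_MONTH;
}

// Dollar cost of 1,000 measurements at a given monthly price.
function costPerThousand(monthlyUsd: number, measurements: number): number {
  return (monthlyUsd / measurements) * 1000;
}

const pingdomPerMonth = pingdomMeasurementsPerMonth(200, 30); // 288000
const stationPerMonth = stationMeasurementsPerMonth(1000000); // 720000000
const pingdomCost = costPerThousand(1500, pingdomPerMonth); // ~ $5.21 per 1,000
const stationCost = costPerThousand(1500, stationPerMonth); // ~ $0.0021 per 1,000
```

On these numbers, Station delivers 2,500 times as many measurements per dollar.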

Where does this go?

- The Station team takes a 10% cut ($150). This helps us to run the services.

- The fixed cost of running a Meridian Impact Evaluator is ~$150 per month.

- The remaining ~$1200 is split between Station operators based on their contribution.
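The split above can be written as a function of the monthly payment. It applies a 10% Station team cut and a fixed ~$150 Impact Evaluator cost, then shares the remainder in proportion to each operator's contribution. How contribution is scored (for example, accepted measurements per month) is an assumption, as are the operator names in the usage example.

```typescript
interface PaymentSplit {
  stationTeam: number;
  evaluatorCost: number;
  operators: Record<string, number>; // USD per operator
}

function splitPayment(
  monthlyUsd: number,
  contributions: Record<string, number>, // hypothetical contribution score per operator
  teamCut = 0.1,
  evaluatorCost = 150,
): PaymentSplit {
  const stationTeam = monthlyUsd * teamCut;
  const pool = Math.max(0, monthlyUsd - stationTeam - evaluatorCost);
  const ids = Object.keys(contributions);
  let total = 0;
  for (const id of ids) total += contributions[id];
  const operators: Record<string, number> = {};
  for (const id of ids) {
    // Proportional share of the pool; nothing is paid out if nobody contributed.
    operators[id] = total > 0 ? (pool * contributions[id]) / total : 0;
  }
  return { stationTeam, evaluatorCost, operators };
}
```

For example, `splitPayment(1500, { alice: 3, bob: 1 })` leaves a $1200 pool, shared as $900 and $300.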

Comparison Table

| | Station | Arc | Pingdom |
|---|---|---|---|
| PoPs worldwide | >12000 | ? | 100 (Source) |
| Requests from all geolocations | ✅ | ✅ | Only one location per test |
| Integration into Saturn Infra | ✅ | ✅ | ❌ |
| Check Interval | User defined | User defined | 30 minutes |
| OSS | ✅ | ❌ | ❌ |
| Content addressing friendly | ✅ | ✅ | ❌ |
| Rewards checkers | ✅ | ❌ | ❌ |
