Station Module: L1 Uptime Checker

Background

The Station team has recently launched Station core and the Zinnia runtime. The team is now looking for a good first module with the following requirements:

- It will lead to lots of Station downloads (it helps if it makes sense on people’s desktops)

- Station operators will be able to earn FIL sustainably (i.e. clear business model)

- Not too complicated to engineer

- (Bonus) Alignment with the rest of the RM Lab

| Module | Works on Desktop (needed to get the maximum number of early Stations) | Clear Business Model | Engineering Complexity (relative) | Aligned with RM Lab efforts |
|---|---|---|---|---|
| Bacalhau | Maybe | Unclear | Unclear | No |
| Punchr | Yes | No | Medium | No |
| L1 uptime checker | Yes | Saturn needs this, but it is not a high priority | Lowest | Yes |
| L1 hardware requirement checker | Maybe, but how do you get to a 10gig check? | Saturn needs this, but it is not a high priority | Medium | Yes |
| SP retrieval checker | Yes | Filecoin needs this | Low | Yes |
| L2s for pinning | No | Too early to say | High | Yes |

The clear winners are the L1 uptime checker and the SP retrieval checker. We have chosen to go for the L1 uptime checker first because it is more straightforward than the SP retrieval checker and can be viewed as a first milestone towards the SP retrieval checker.

It is also super aligned with the membership management category of the Saturn Goes Web3 Work.

L1 Uptime Checker Protocol Proposal

Status Quo

Currently the Saturn orchestrator pings each Saturn L1 node every 5 minutes to check that it is still online. If a node does not respond, the orchestrator removes that node's DNS record.

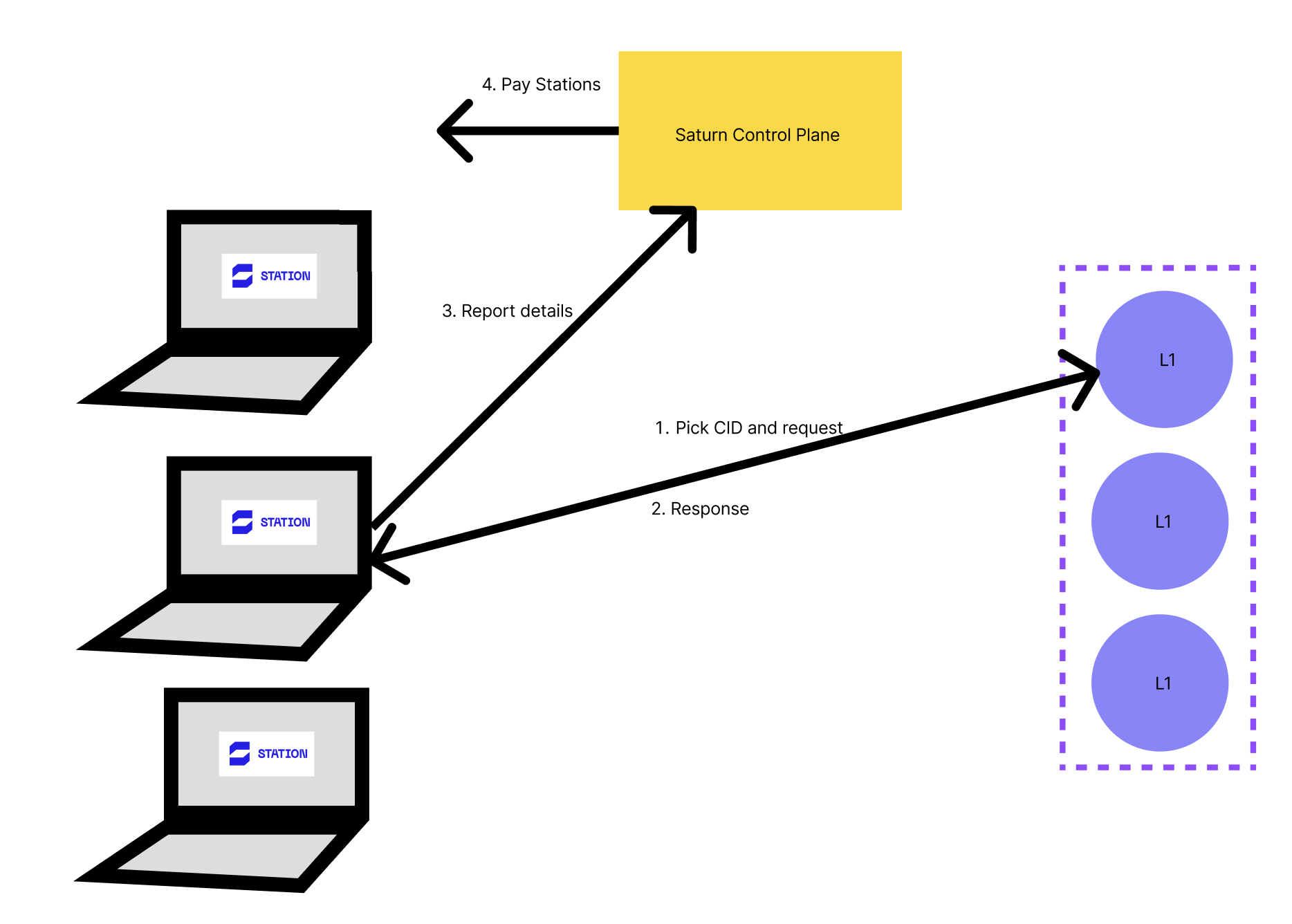

Simplest PoC

- The module will periodically pick a (CID, L1 address) pair to test.

- The module attempts to fetch the CID content from the L1.

- The outcome is reported to a centralised service.

- The Saturn treasury puts aside a certain amount of FIL that is used for rewarding uptime checkers. At the end of each epoch, the checkers are rewarded.
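The PoC steps above can be sketched roughly as follows. This is a minimal illustration, not the module's actual implementation: `run_check`, `split_rewards`, the injected `fetch` and `report` callables, and the proportional split of the reward pool are all assumptions made for the example (the proposal does not say how the pool is divided).

```python
import random
from dataclasses import dataclass
from typing import Callable, Dict, Sequence


@dataclass(frozen=True)
class CheckResult:
    cid: str
    l1_address: str
    success: bool


def run_check(
    cids: Sequence[str],
    l1_addresses: Sequence[str],
    fetch: Callable[[str, str], bytes],      # hypothetical: fetch(l1_address, cid)
    report: Callable[[CheckResult], None],   # hypothetical: send to the central service
    rng: random.Random,
) -> CheckResult:
    """Pick one (CID, L1 address) pair, try to fetch it, and report the outcome."""
    cid = rng.choice(cids)
    l1_address = rng.choice(l1_addresses)
    try:
        fetch(l1_address, cid)
        success = True
    except Exception:
        # Any failure (timeout, refused connection, bad status) counts as "down".
        success = False
    result = CheckResult(cid, l1_address, success)
    report(result)
    return result


def split_rewards(pool_atto_fil: int, checks_by_checker: Dict[str, int]) -> Dict[str, int]:
    """Assumed policy: split the epoch's pool in proportion to reported checks."""
    total = sum(checks_by_checker.values())
    if total == 0:
        return {checker: 0 for checker in checks_by_checker}
    # Integer division keeps amounts in whole attoFIL; any remainder stays in the pool.
    return {c: pool_atto_fil * n // total for c, n in checks_by_checker.items()}
```

Injecting `fetch` and `report` keeps the loop independent of the transport and of the reporting service, both of which are still open in this proposal.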

Advanced Trustless Protocol Draft

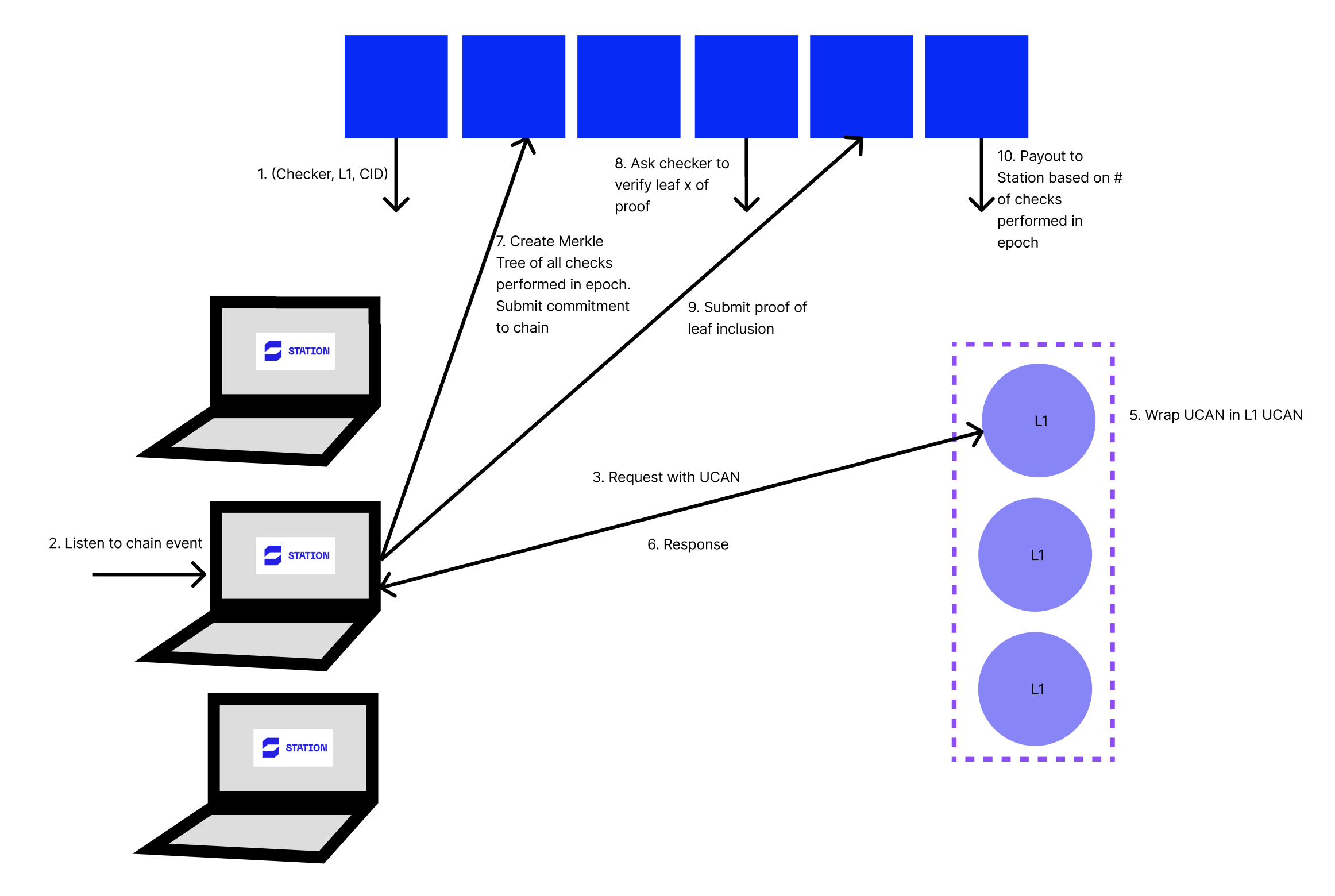

Phase 1: Trigger

- Both the set of L1s and the set of Uptime checkers are listed on-chain.

- At a regular interval, the checker contract chooses a tuple (uptime checker, L1, CID).

- Each module listens for tuples that name it as the checker and then performs an uptime check on the specified L1 node.
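One possible way to picture the trigger phase is a deterministic selection derived from a per-interval seed. The sketch below simulates this off-chain in Python; the seed source, the SHA-256 based indexing, and the names `select_tuple` and `is_assigned_to` are assumptions for illustration, since the proposal does not specify how the contract chooses the tuple.

```python
import hashlib
from typing import Sequence, Tuple


def _index(seed: bytes, label: bytes, size: int) -> int:
    """Derive an index in [0, size) from the seed; the label separates the three draws."""
    digest = hashlib.sha256(seed + b"|" + label).digest()
    return int.from_bytes(digest, "big") % size


def select_tuple(
    seed: bytes,
    checkers: Sequence[str],
    l1s: Sequence[str],
    cids: Sequence[str],
) -> Tuple[str, str, str]:
    """Choose (uptime checker, L1, CID) deterministically from a per-interval seed."""
    if not (checkers and l1s and cids):
        raise ValueError("checkers, l1s and cids must all be non-empty")
    return (
        checkers[_index(seed, b"checker", len(checkers))],
        l1s[_index(seed, b"l1", len(l1s))],
        cids[_index(seed, b"cid", len(cids))],
    )


def is_assigned_to(checker_id: str, selected: Tuple[str, str, str]) -> bool:
    """A module only acts on tuples that name it as the checker."""
    return selected[0] == checker_id
```

Because the selection depends only on the seed and the on-chain lists, anyone can recompute which checker was assigned to which L1 for a given interval.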

Phase 2: Request Flow

- The module adds a UCAN to its request and fires it off to the L1.

- The L1 receives the request, wraps the module's UCAN in its own UCAN, and returns the response.

- On success, the checker module wraps the returned UCAN in its own UCAN again and stores the result.

- If there is no response, i.e. the L1 is not up, the checker must report this to the Saturn orchestrator. (This is a possible attack vector.)
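The three layers of wrapping in the success path can be illustrated with nested signed envelopes. This sketch is not the UCAN format: it uses HMAC-SHA256 from the standard library as a stand-in for UCAN signatures (real UCANs are signed with public-key cryptography), and `wrap`, `verify`, and `build_check_record` are hypothetical names. One function plays both parties here purely to show the nesting.

```python
import hashlib
import hmac
import json
from typing import Any, Dict


def _body(issuer: str, payload: Dict[str, Any]) -> bytes:
    # Canonical bytes to sign: issuer plus payload with sorted keys.
    return json.dumps({"iss": issuer, "payload": payload}, sort_keys=True).encode()


def wrap(issuer: str, key: bytes, payload: Dict[str, Any]) -> Dict[str, Any]:
    """Sign a payload; a nested envelope goes under payload['inner']."""
    sig = hmac.new(key, _body(issuer, payload), hashlib.sha256).hexdigest()
    return {"iss": issuer, "payload": payload, "sig": sig}


def verify(envelope: Dict[str, Any], keys: Dict[str, bytes]) -> bool:
    """Check this envelope's signature, then recurse into any nested envelope."""
    key = keys.get(envelope["iss"])
    if key is None:
        return False
    expected = hmac.new(
        key, _body(envelope["iss"], envelope["payload"]), hashlib.sha256
    ).hexdigest()
    if not hmac.compare_digest(expected, envelope["sig"]):
        return False
    inner = envelope["payload"].get("inner")
    return True if inner is None else verify(inner, keys)


def build_check_record(
    cid: str, l1_id: str, content: bytes, checker_key: bytes, l1_key: bytes
) -> Dict[str, Any]:
    """Simulate the success path: request -> L1 response -> stored checker record."""
    request = wrap("checker", checker_key, {"cid": cid, "l1": l1_id})
    response = wrap(
        l1_id,
        l1_key,
        {"inner": request, "content_hash": hashlib.sha256(content).hexdigest()},
    )
    return wrap("checker", checker_key, {"inner": response})
```

The stored record carries the L1's signature over the checker's original request, which is what makes a successful check hard to fabricate without the L1's cooperation. The no-response path has no such counter-signature, which is consistent with the attack vector noted above.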

Phase 3: Proof

- The checker creates a Merkle tree root (or, equivalently, a KZG commitment) of all its checker requests and submits it on-chain. This commitment transparently includes the number of leaves (requests) that the checker has made.
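A minimal sketch of the Merkle variant of this commitment, assuming each leaf is the serialized record of one check. The hash function (SHA-256), the domain-separation prefixes, and the rule of duplicating the last node on odd levels are illustrative choices, not part of the proposal.

```python
import hashlib
from typing import Sequence, Tuple


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves: Sequence[bytes]) -> bytes:
    """Compute a binary Merkle root over the serialized check records."""
    if not leaves:
        raise ValueError("at least one leaf is required")
    # Prefix 0x00 for leaves and 0x01 for inner nodes so the two cannot be confused.
    level = [_h(b"\x00" + leaf) for leaf in leaves]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # assumed rule: duplicate the last node
        level = [_h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def commitment(leaves: Sequence[bytes]) -> Tuple[bytes, int]:
    """The value submitted on-chain: (root, number of leaves)."""
    return merkle_root(leaves), len(leaves)
```

Publishing the leaf count alongside the root is what lets the contract later ask for "leaf number i" and also fixes the number of checks the checker is claiming payment for.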

Phase 4: Verify

- Every so often, the smart contract will select a proof and a leaf number to verify from across the set of submitted proofs. (It could do this for every proof.)

- The checker listens for this on-chain event. If it is selected, it must provide a Merkle proof (or, equivalently, a KZG proof) of inclusion in the tree for that leaf.
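The inclusion proof for a challenged leaf could look like the sketch below. It assumes a SHA-256 binary Merkle tree with prefixed leaf and inner hashes and last-node duplication on odd levels; `prove` and `verify_proof` are hypothetical names, and an actual contract would run the verification step on-chain.

```python
import hashlib
from typing import List, Sequence, Tuple


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def prove(leaves: Sequence[bytes], index: int) -> Tuple[bytes, List[bytes]]:
    """Checker side: return (root, sibling path) for the leaf at `index`."""
    if not 0 <= index < len(leaves):
        raise IndexError("leaf index out of range")
    level = [_h(b"\x00" + leaf) for leaf in leaves]
    path: List[bytes] = []
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # assumed rule: duplicate the last node
        path.append(level[index ^ 1])  # sibling of the current node
        level = [_h(b"\x01" + level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return level[0], path


def verify_proof(root: bytes, leaf: bytes, index: int, path: Sequence[bytes]) -> bool:
    """Verifier side: recompute the root from the leaf and its sibling path."""
    node = _h(b"\x00" + leaf)
    for sibling in path:
        if index % 2 == 0:
            node = _h(b"\x01" + node + sibling)
        else:
            node = _h(b"\x01" + sibling + node)
        index //= 2
    return node == root
```

The verifier only needs the committed root, the challenged leaf number, the leaf itself, and a path whose length is logarithmic in the number of checks, which keeps the on-chain cost small.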

Phase 5: Payment

- The checker is rewarded with an amount of FIL equal to the number of checks multiplied by the price per check.
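As a small worked example of the payment rule, the sketch below computes the reward in attoFIL (1 FIL = 10^18 attoFIL) so that the arithmetic stays in exact integers. The function name and the example price are assumptions; the proposal does not set a price per check.

```python
ATTO_PER_FIL = 10**18  # 1 FIL = 10^18 attoFIL


def checker_reward(num_checks: int, price_per_check_atto: int) -> int:
    """Reward in attoFIL: number of committed checks times the price per check."""
    if num_checks < 0 or price_per_check_atto < 0:
        raise ValueError("inputs must be non-negative")
    return num_checks * price_per_check_atto


# Example with a hypothetical price of 0.0005 FIL per check:
reward = checker_reward(1200, 5 * 10**14)
reward_in_fil = reward / ATTO_PER_FIL  # for display only
```

With these example numbers, 1200 checks earn 6 * 10^17 attoFIL, i.e. 0.6 FIL.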

This flow reduces the amount of traffic and storage used on-chain. Because any leaf may be selected at random in the verify phase, checkers have an incentive to perform every check and to include every proof in the commitment.
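To make the role of randomness concrete, here is a rough estimate under simplifying assumptions that are not part of the proposal: a fraction $f$ of the leaves in a commitment cannot be backed by a valid proof, and the contract challenges $k$ leaves chosen independently and uniformly at random. The probability that at least one bad leaf is challenged is then

$$
P_{\text{detect}} = 1 - (1 - f)^{k}
$$

For example, with $f = 0.1$ and $k = 20$ this gives $1 - 0.9^{20} \approx 0.88$, so even a modest number of challenges makes skipping checks risky, provided the penalty for a failed challenge is large compared with the reward for a single check.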

Inspiration from Pocket Network Flow

Notes

Roadmap

- 🟢 IPFS Thing: Simple PoC

- 🎯 Q3 2023: Advanced Trustless Protocol

Appendix

SP Retrieval Checker Proposal

- The module will periodically pick a (cid, address, protocol) triple to test, where cid is the ID of the content stored by the SP, address is the address of the SP’s content provider node, and protocol is “bitswap” or “graphsync”.

- The module attempts to fetch the CID content from the address using the selected protocol.

- The module reports the outcome.

Implementation details:

- The first step can be initially implemented using a centralised service operated by PL or Filecoin Foundation. This service will provide an API endpoint to obtain the job definition triple.

- Reports can be initially submitted to the same service too.

- Later we can research ways to make this more decentralised.

I think this may have a relatively straightforward way towards incentivisation:

- The money for rewards can come from the person making the storage deal or maybe from the storage fee itself.

- I think we can get reliable proof of a job performed if we combine a random id associated with the job definition triple plus Drand for time-based randomness plus the content being retrieved and calculate a unique hash from that. We can also record the time when the retrieval check result was submitted to ensure the node performed the check around the time when Drand generated the random value.
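The proof-of-job idea in the last bullet could be sketched as below. This is only an illustration: SHA-256, the length-prefixed encoding, the freshness window, and the names `job_proof` and `submitted_in_time` are assumptions, and fetching the Drand value itself is left out.

```python
import hashlib


def job_proof(job_id: bytes, drand_randomness: bytes, content: bytes) -> str:
    """Hash the job's random id, the Drand value, and the retrieved content together."""
    h = hashlib.sha256()
    for part in (job_id, drand_randomness, content):
        # Length-prefix each part so that different splits of the same bytes
        # cannot produce the same hash input.
        h.update(len(part).to_bytes(8, "big"))
        h.update(part)
    return h.hexdigest()


def submitted_in_time(drand_round_time: float, submitted_at: float, max_delay_s: float) -> bool:
    """Accept a report only if it arrived within max_delay_s after the Drand round."""
    return 0 <= submitted_at - drand_round_time <= max_delay_s
```

The service that issued the job can recompute the same hash only if it knows the content, so in this sketch it would need either its own copy of the content or a second checker's result to compare against.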